My Homelab Upgrade '21


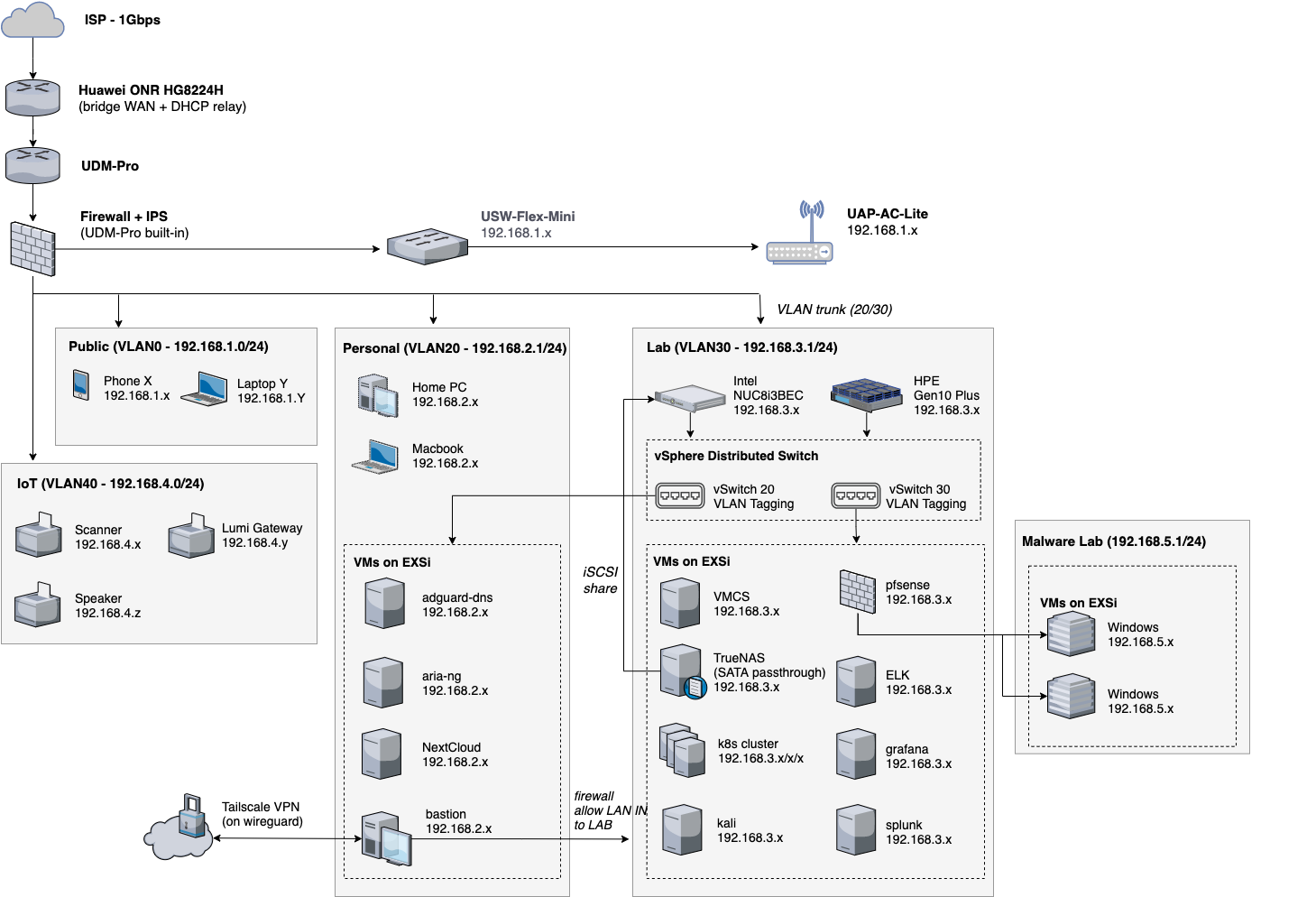

Dec-2023 Update: A lot has happened over the pandemic, and the lab has obviously gained some weight too! I haven’t found time to write an updated post on the new setup, but here’s a quick preview of what it looks like now.

*"Nah human, that's not what I asked for"*

“So I heard you had a homelab…” #

If you happen to know me in real life, you have probably heard me nagging about my homelab at some point. It started two years ago as a simple Intel NUC box that let me play around with VMs on ESXi. As time went by (time being one of the few things in surplus during this pandemic), the setup gradually morphed into a full-fledged homelab, which I’d like to share in this post.

My *homelab* in 2018. Back then it was just a tiny box sitting silently under the monitor

This year’s new setup with the HPE Gen10 Plus and Ubiquiti UDM. Not particularly aesthetically pleasing, but hey, the IKEA rack works

I used to have a typical home network like everyone else: a single WiFi router in the living room, boosted by an extender at the far end of the house. The NUC was directly connected to the router through an Ethernet cable and there was zero network isolation whatsoever. I didn’t really like the setup because

- There was no firewall or VLAN to isolate traffic. That left my homelab services exposed, and it meant vulnerable IoT devices could be used as attack entry points, greedy apps and hardware could fingerprint the intranet, and my misconfigured malware lab could ruin life for everyone in the family.

- I had no visibility into what was happening in the home network. As someone who used to work on detection, I want to be the first to know if there’s an intrusion.

- The WiFi extender didn’t support seamless roaming, so I had to manually switch between SSIDs whenever I walked into a room where the signal from the extender was stronger.

- The Intel NUC8i3BEH had only a 2-core CPU (i3-8109U) plus 16GB RAM. It couldn’t run more demanding workloads, e.g. ELK or VMware vCenter. I also had to shut down VMs from time to time to save RAM for new stuff I wanted to try out.

So what’s the solution? Although I’m a big fan of server racks (r/homelab, you know what I’m talking about), I wanted a small form factor setup that’s both quiet and maintainable. After all, having fun with the homelab is one thing, but having a server farm that feels like another day job is another story…

So after a few months of trial and error, I finally settled on a homelab design that I’m satisfied with.

Rack and Devices #

Having decided not to buy rackmount units, it was a lot easier to choose the devices. For the network upgrade, I picked

- Ubiquiti UniFi Dream Machine (UDM) as the router. The UDM is an all-in-one model that combines the UniFi Controller, firewall, IDS/IPS, DPI, and VLAN support in a single box. IMO, Ubiquiti has really nailed it. The UDM provides enterprise-grade features while still keeping the setup experience almost plug-and-play.

- Ubiquiti USW Flex Mini Managed 4-port Switch as the access switch for my desktop

- Ubiquiti UAP-AC-Lite as the wireless AP

For the new lab server, I hesitated among the HPE Gen10 Plus, another Intel NUC, and a Dell R720. The R720 was quickly out of the picture after I saw people complain about how loud its fans were (not a problem for 1U/2U servers in a data centre, but definitely not living-room-friendly). Adding another NUC also seemed feasible, but it fell short with its limited hard disk slots and lack of ECC memory support.

In the end, I picked an HPE Gen10 Plus from Amazon US. The default config came with a Xeon E-2224 CPU and 16GB RAM. I bought some additional parts on top of that to make full use of the machine.

- 5 × Seagate IronWolf 4TB HDDs to build a ZFS RAIDZ2 pool

- a 32GB Hynix DDR4 ECC memory module to expand the RAM. (Mixing different sizes of RAM only works in flex mode, but given that my workloads are mostly memory bound, I’m happy to trade performance for the money saved.)

- an iLO 5 ProLiant board to enable out-of-band (OOB) management

- a QNAP QM2-2P-344 Dual M.2 PCIe SSD Expansion Card to give Gen10 Plus two M.2 SSD slots

- an M.2 PCIe 250GB HP EX900 SSD as the ESXi host disk
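As a back-of-the-envelope check on the storage side, here is a minimal sketch of the RAIDZ2 capacity arithmetic. It assumes a single RAIDZ2 vdev and ignores ZFS metadata and padding overhead, so real usable space will be somewhat lower. The function names are mine, and both the four-disk case (the number of bays in the Gen10 Plus) and the five-disk case (the number of drives bought) are shown since either could apply.

```python
def raidz_usable_tb(disks: int, disk_tb: float, parity: int = 2) -> float:
    """Rough usable capacity of one RAIDZ vdev: data disks times disk size.

    parity=2 corresponds to RAIDZ2. ZFS overhead is deliberately ignored.
    """
    if disks <= parity:
        raise ValueError("a RAIDZ vdev needs more disks than parity drives")
    return (disks - parity) * disk_tb


def tb_to_tib(tb: float) -> float:
    """Convert decimal terabytes (10^12 bytes) to tebibytes (2^40 bytes)."""
    return tb * 1000**4 / 1024**4


for disks in (4, 5):
    usable = raidz_usable_tb(disks=disks, disk_tb=4.0)
    print(f"{disks} x 4TB in RAIDZ2: {usable:.0f} TB (~{tb_to_tib(usable):.2f} TiB)")
```

Either way, two drives' worth of space goes to parity, which is the price for surviving two simultaneous disk failures.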

The internals of the HPE Gen10 Plus. Why do you only see 4 HDDs in the photo? Because one HDD slipped out of my hand while I was driving in the mounting screws. A $200 lesson in how fragile HDDs are.

The iLO5 management card that grants remote console access and many add-on features. The card comes with an OOB network port, but you can configure the iLO web console to be accessed from port 1 on the server NIC.

The devices were ready. What about messy cables? Buying a $200 15kg metal server enclosure with patch panels to hide the clutter obviously doesn’t seem like a clever idea. Luckily I found this $5 IKEA GREJIG shoe rack. It worked like a charm with cable ties.

A poor man’s cable management system. Made in Sweden

The power socket fits nicely on the side of the rack

Networking #

Now comes my favourite part: the network. To keep this post short, I’d like to run through the network from the edge to the end server, and highlight some design rationales and trade-offs along the way.

Uplink and Edge Router #

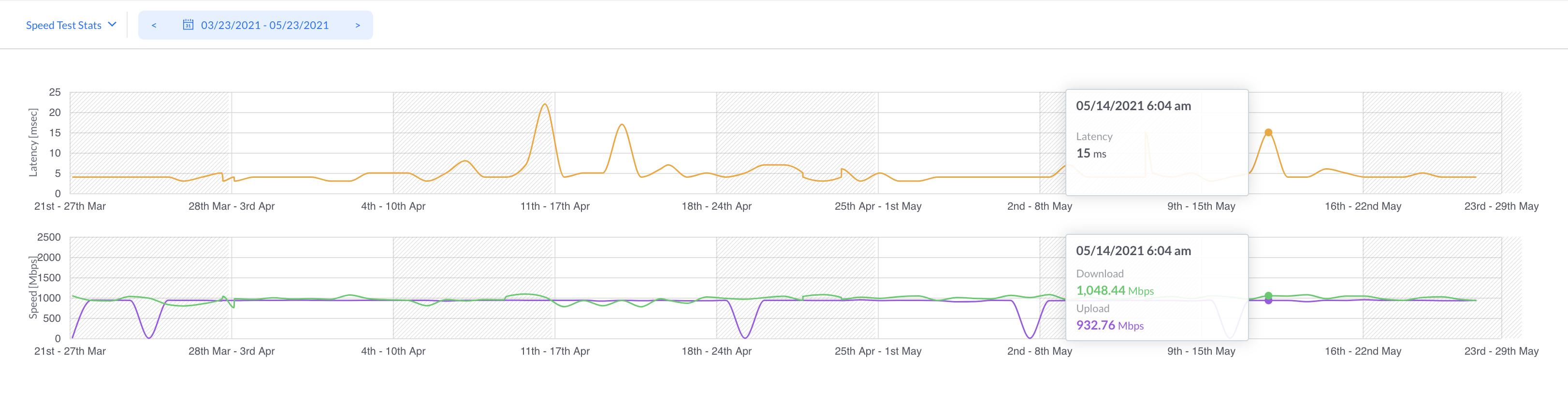

My house has the cheapest-in-town 1 Gbps subscription from WhizComms. WhizComms is a local virtual ISP (AS135600) that peers with Singtel (AS9506) and resells its broadband services. How good is it? Well, the link quality isn’t stable, but most users shouldn’t notice.

WhizComms’s plan comes with a Singtel Optical Network Router (ONR). By default, the ONR works in router mode and takes the public IP for itself. If you connect another router behind the ONR, you end up with a nasty double NAT topology, which you’ll want to avoid at all costs. To fix it, I configured the ONR to run in WAN bridge mode and enabled DHCP relay as per @lffl’s tutorial. This allowed my UDM to get a public IP directly through DHCP and become the edge router of the entire network.
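A quick way to tell whether a router is stuck behind another NAT is to look at the WAN address it received. The sketch below is only a heuristic under that assumption, using Python's standard `ipaddress` module; the function name and the sample addresses are made up for illustration.

```python
import ipaddress


def looks_double_natted(wan_ip: str) -> bool:
    """Return True if the router's WAN address is not publicly routable.

    A private (RFC 1918) or carrier-grade NAT (100.64.0.0/10) address on the
    WAN side means some upstream box is still doing NAT.
    """
    return not ipaddress.ip_address(wan_ip).is_global


# Hypothetical WAN addresses: one handed out by an ONR in router mode,
# one that is publicly routable.
for wan in ("192.168.1.2", "8.8.8.8"):
    verdict = "double NAT likely" if looks_double_natted(wan) else "public IP"
    print(f"{wan}: {verdict}")
```

With the ONR in bridge mode, the UDM's WAN address should fall into the second category.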

Firewall and segmentation #

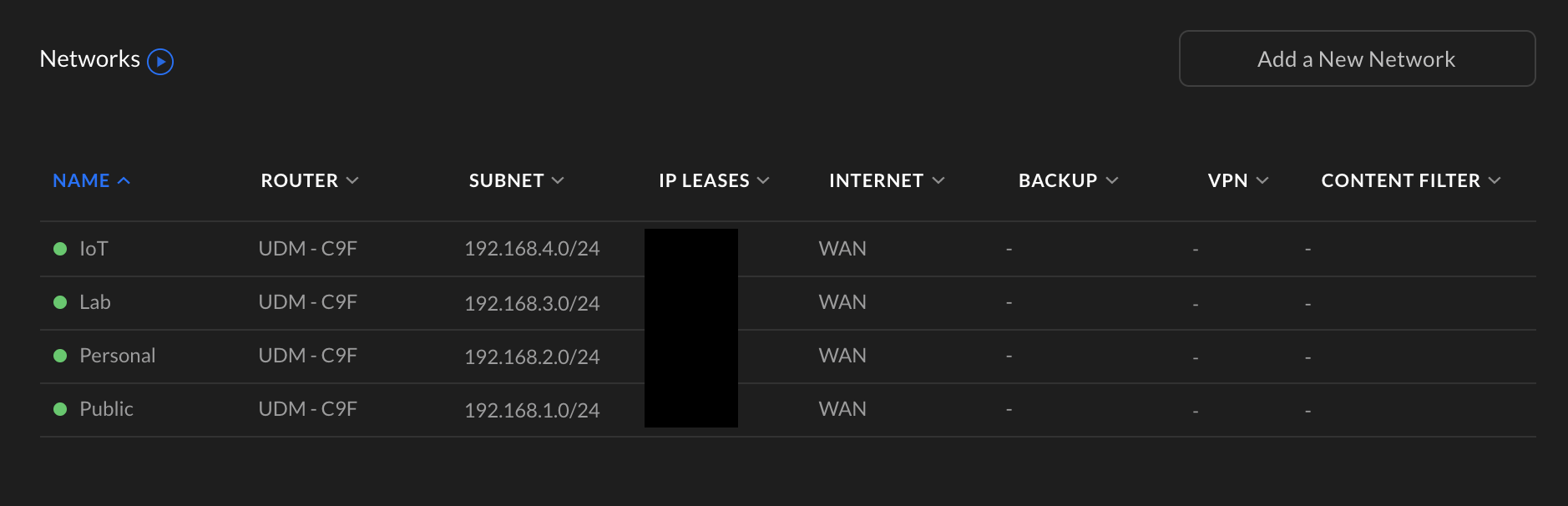

With the UDM as the router, I could finally isolate my network with VLANs and apply proper firewall rules to regulate access. What I like most about the UDM is its ability to broadcast multiple wireless SSIDs and bind each one to a specific VLAN. I can safely connect IoT devices, e.g. the vacuum robot, to an IoT WiFi and rest assured that the robot won’t be able to reach anyone else in the network.

The VLAN configuration on UDM

But the IoT devices have also caused the most trouble. There are two major issues when you put them in an isolated network.

- Most IoT devices rely on protocols like mDNS for service discovery. By default, mDNS doesn’t flow between VLANs, so you’re likely to see your Spotify app stop streaming to your wireless speakers. To fix it, you’ll need to enable a multicast DNS reflector on the router (luckily the UDM has one) and create a firewall exception to allow the traffic.

- Some devices don’t support band steering (a shared SSID for both the 2.4GHz and 5GHz networks). For those, you’ll have to disable band steering and turn off the 5GHz radio on the IoT SSID.
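To see why mDNS stays inside its own VLAN, it helps to look at the address it uses. mDNS over IPv4 sends to the multicast group 224.0.0.251 on UDP port 5353, which sits in the 224.0.0.0/24 local network control block that routers do not forward. The snippet below is a small illustration of that fact; the helper name is my own.

```python
import ipaddress

MDNS_GROUP = ipaddress.ip_address("224.0.0.251")  # mDNS IPv4 group, UDP port 5353
LOCAL_CONTROL_BLOCK = ipaddress.ip_network("224.0.0.0/24")  # never routed


def stays_on_local_segment(group: ipaddress.IPv4Address) -> bool:
    """True if traffic to this multicast group is confined to one L2 segment."""
    return group.is_multicast and group in LOCAL_CONTROL_BLOCK


print(stays_on_local_segment(MDNS_GROUP))
```

Because the packets never leave the segment on their own, something on the router has to repeat them into the other VLANs, which is the job of the multicast DNS reflector.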

ESXi Networking #

One of the most fundamental features I wanted for the homelab is the ability to connect VMs to different VLANs. This lets me host a mixture of personal (e.g. Plex media server) and lab workloads on a single ESXi host without worrying that they might interact with each other.

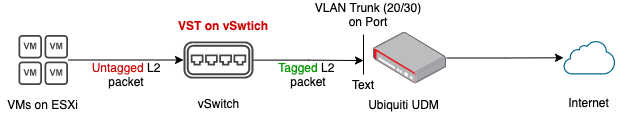

The proper way to do it is through VLAN tagging, which adds an 802.1Q VLAN tag to the VM’s traffic before it reaches the router. This can be implemented as Virtual Switch Tagging (VST) on ESXi (see the traffic flow below).

Data flow of VMs in the ESXi cluster
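For the curious, the 802.1Q tag itself is just four bytes inserted after the source MAC address: a fixed TPID of 0x8100 followed by a 16-bit field holding the priority, a drop-eligible bit, and the 12-bit VLAN ID. Here is a minimal sketch of what the virtual switch conceptually does to each frame. The function names are mine, and real switches also recompute the frame check sequence, which is omitted here.

```python
import struct

TPID_8021Q = 0x8100  # EtherType value that marks a frame as 802.1Q-tagged


def dot1q_tag(vlan_id: int, priority: int = 0, dei: int = 0) -> bytes:
    """Build the 4-byte 802.1Q tag: TPID followed by PCP | DEI | VID."""
    if not 1 <= vlan_id <= 4094:
        raise ValueError("VLAN ID must be between 1 and 4094")
    if not 0 <= priority <= 7 or dei not in (0, 1):
        raise ValueError("priority must be 0-7 and dei must be 0 or 1")
    tci = (priority << 13) | (dei << 12) | vlan_id
    return struct.pack("!HH", TPID_8021Q, tci)


def tag_frame(frame: bytes, vlan_id: int) -> bytes:
    """Insert the tag after the destination and source MACs (first 12 bytes)."""
    return frame[:12] + dot1q_tag(vlan_id) + frame[12:]


# An untagged dummy frame: two zeroed MACs, EtherType 0x0800 (IPv4), payload.
untagged = bytes(12) + b"\x08\x00" + b"payload"
print(tag_frame(untagged, vlan_id=20).hex())
```

The trunk port on the router reads the VLAN ID back out of this tag to decide which network the frame belongs to.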

The idea is rather simple. First, set the port profile on the UDM to be a VLAN trunk that accepts traffic from both VLAN 20 and VLAN 30. On ESXi, create two or more vSwitches and assign a VLAN ID to each one. When creating a VM, attach it to the desired vSwitch so that it is placed in the corresponding VLAN. When the VM starts, the OS sends a DHCP request to the router. Depending on which VLAN the request comes from, the router assigns an IP from that VLAN’s IP range.

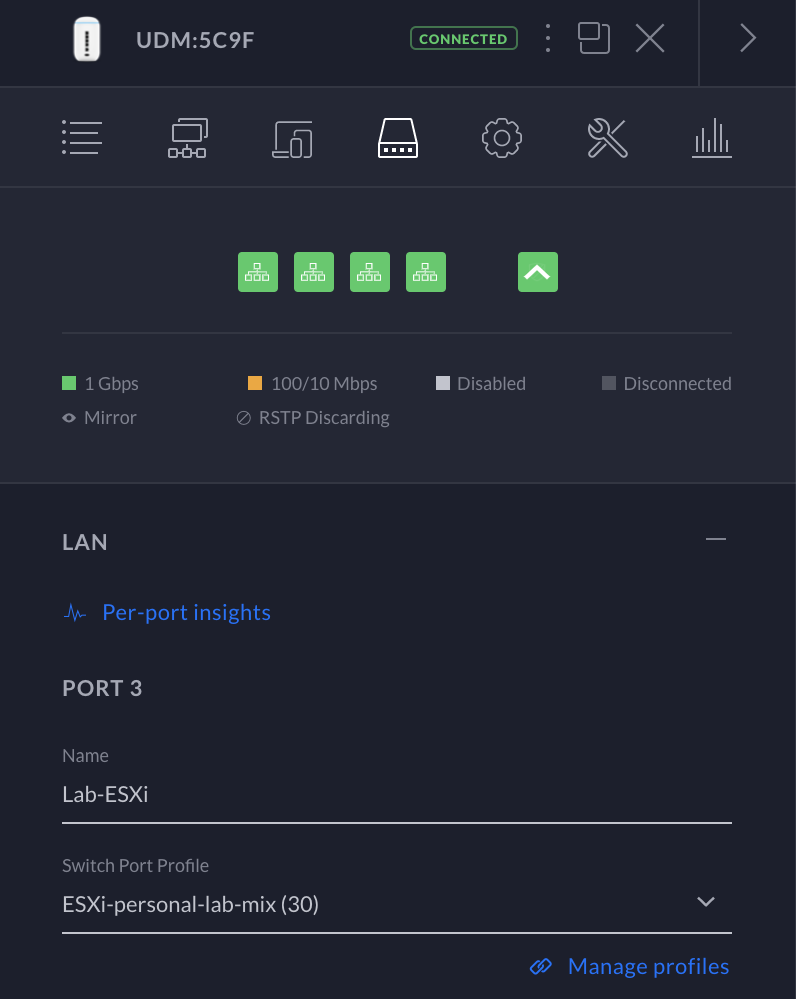

Configure the port profile on UDM to allow VLAN trunk

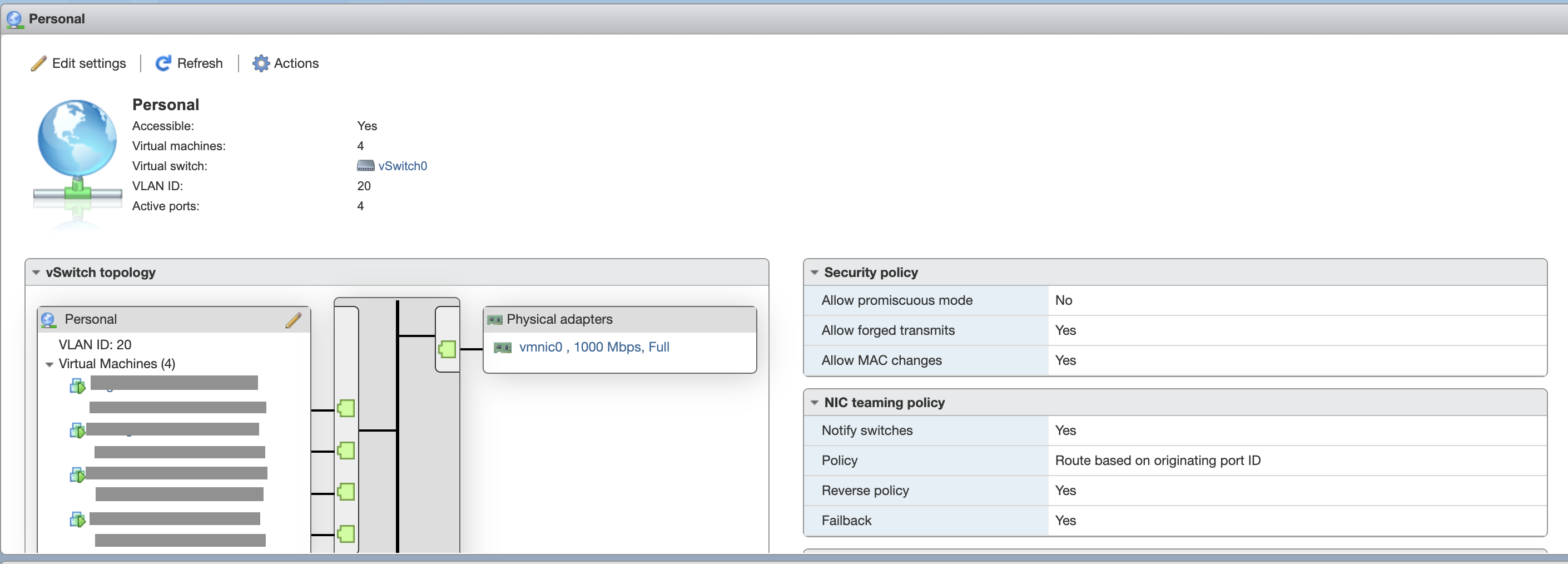

The vSwitch that maps to the Personal VLAN (20)
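The per-VLAN DHCP behaviour described in this section can be modelled in a few lines. This is a toy sketch, not how the UDM is implemented: the subnets are a hypothetical address plan (only the VLAN IDs 20 and 30 come from my setup), and I assume the router takes the first usable address in each range.

```python
import ipaddress

# Hypothetical address plan; only the VLAN IDs are taken from the post.
VLAN_SUBNETS = {
    20: ipaddress.ip_network("192.168.20.0/24"),  # Personal VLAN
    30: ipaddress.ip_network("192.168.30.0/24"),  # assumed to be the lab VLAN
}


def offer_address(vlan_id: int, leased: set) -> ipaddress.IPv4Address:
    """Pick the first free host address in the pool of the request's VLAN."""
    hosts = iter(VLAN_SUBNETS[vlan_id].hosts())
    next(hosts)  # skip the first usable address, assumed to be the gateway
    for candidate in hosts:
        if candidate not in leased:
            return candidate
    raise RuntimeError(f"DHCP pool for VLAN {vlan_id} is exhausted")


print(offer_address(20, leased=set()))
print(offer_address(30, leased={ipaddress.ip_address("192.168.30.2")}))
```

The key point is that the VM never chooses its network: the VLAN tag on the request decides which pool the address comes from.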

Security Add-ons #

Ubiquiti has many strengths, but security is certainly not one of them. Besides the catastrophic data breach in March 2021, Ubiquiti has struggled to deliver some of the security features it touts as major selling points. The current UDM product line offers a basic firewall without hit counts, a toy IDS/IPS that operates as a black box, a DPI that shows incorrect traffic stats, and a toy honeypot that only detects HTTP connections on port 80. I’m not sure if I’m being too harsh on a $500 device, but these features barely worked even in a homelab environment.

Servers and Services #

ESXi + vCenter #

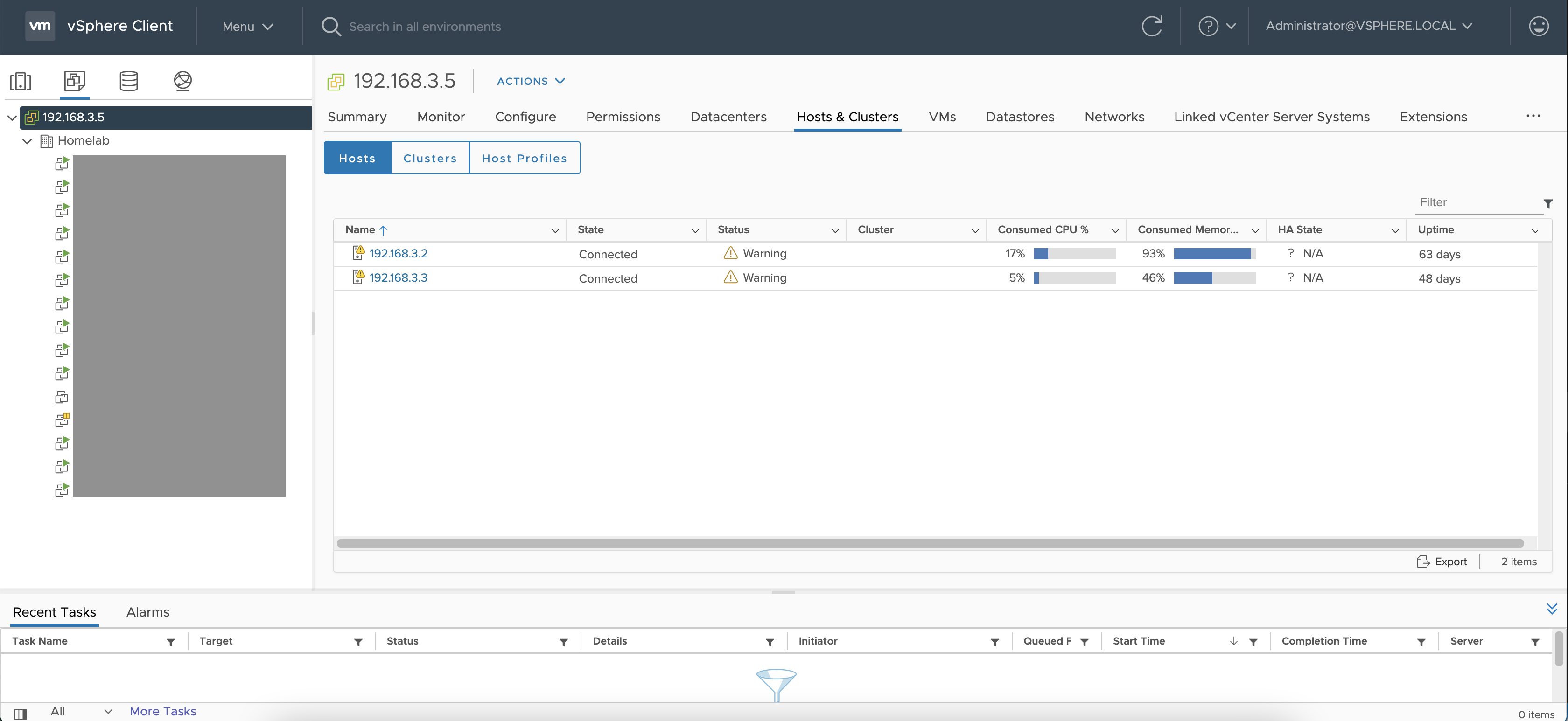

The upgraded homelab now has two servers (the NUC and the HPE Gen10 Plus), each running as an ESXi host, and the whole ESXi cluster is managed by a vCenter instance running on the Gen10 Plus. Being able to manage two lab servers from one web console is a huge efficiency boost for me. vCenter makes VM provisioning, migration, and cluster upgrades a lot easier to deal with.

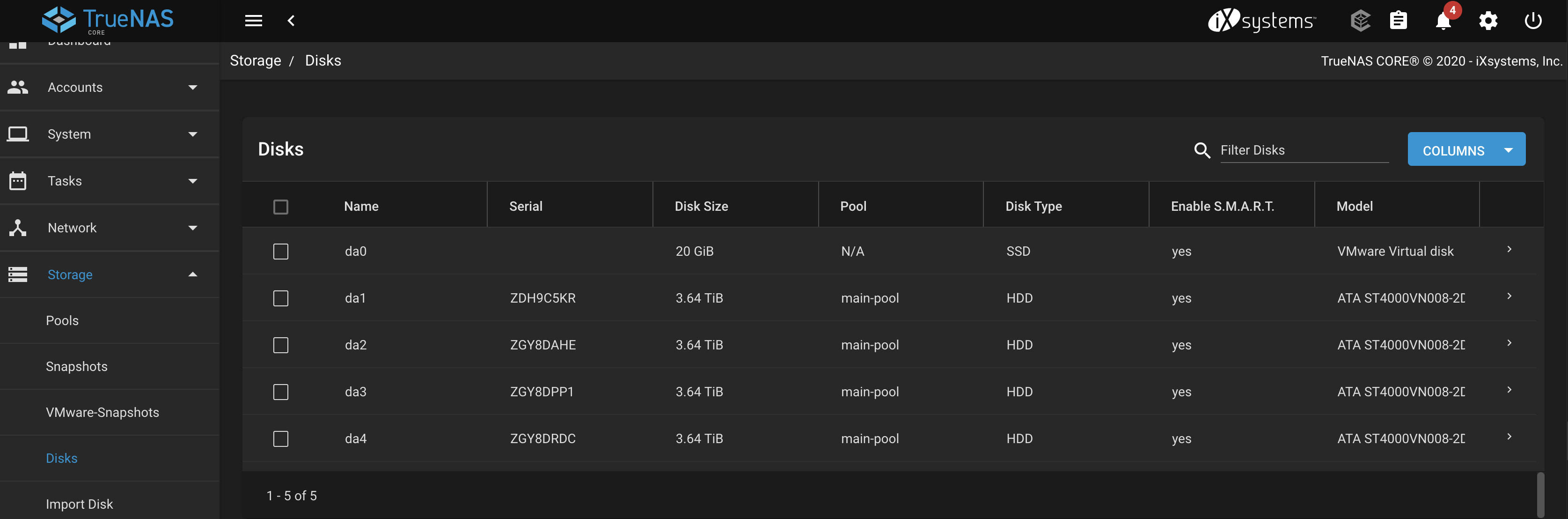

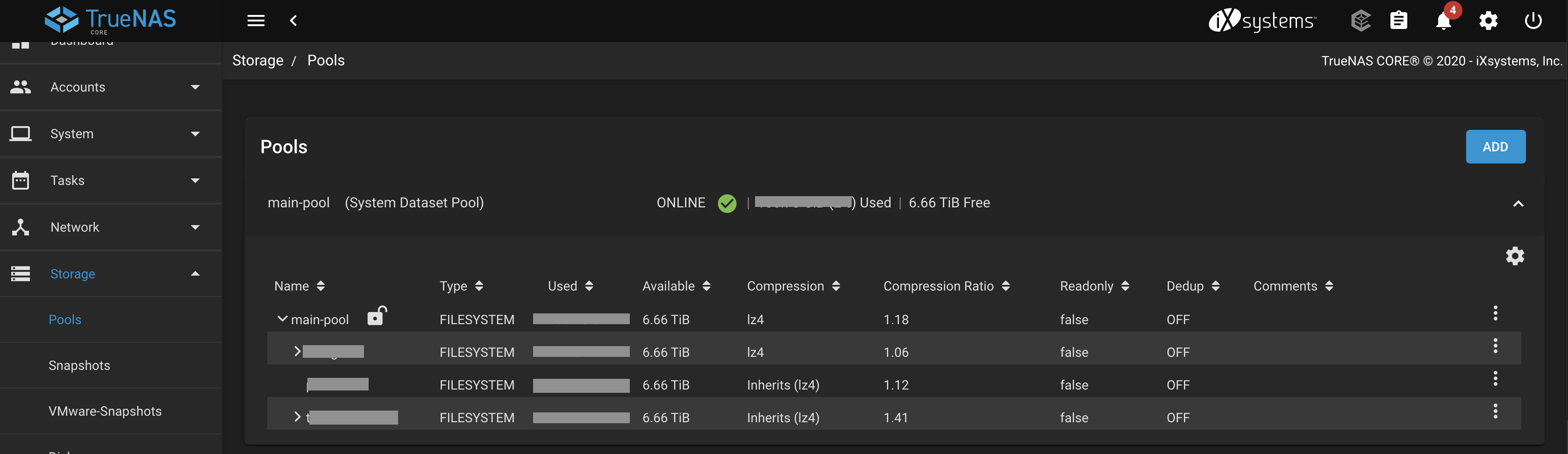

TrueNAS #

Thanks to the 4 disk bays that come with the Gen10 Plus, I could finally build a NAS. However, I didn’t want to dedicate the whole machine to a native TrueNAS install, which seemed like a waste of such a nice server. A naive solution would be to create VMDKs in ESXi first, then mount the virtual disks in the VM to build a ZFS pool. I didn’t like that idea either: having two layers of file systems could make disk recovery a real disaster, not to mention the performance penalty of VMDK.

Another seemingly legitimate solution is to deploy TrueNAS as a VM on top of ESXi. However, the TrueNAS VM would then be cut off from direct block access to the HDDs and therefore unable to build a ZFS RAID natively. What if I passed the SATA drives through, just like a normal GPU PCIe passthrough? I followed this lead and found there was actually a way to do it. Although VMware has no out-of-the-box support for SATA passthrough, one can create a Raw Device Mapping (RDM) that points to each SATA HDD, then create a SCSI controller in the VM and attach the RDM as an existing hard disk. The trick worked perfectly in TrueNAS.
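For reference, local-disk RDMs are usually created from the ESXi shell with `vmkfstools`, where `-z` creates a physical compatibility mapping and `-r` a virtual compatibility one. The helper below only builds the command string for one disk; it is a sketch that assumes physical compatibility mode, and the disk identifier, datastore name, and file name are placeholders rather than the ones from my host.

```python
import shlex


def rdm_create_command(disk_id: str, datastore: str, vmdk_name: str) -> str:
    """Build the vmkfstools call that creates an RDM pointer file for one disk.

    -z requests a physical compatibility (pass-through) mapping; this choice
    is an assumption, as is every name passed in below.
    """
    device = f"/vmfs/devices/disks/{disk_id}"
    pointer = f"/vmfs/volumes/{datastore}/{vmdk_name}.vmdk"
    return shlex.join(["vmkfstools", "-z", device, pointer])


# Placeholder identifiers for illustration only.
print(rdm_create_command("t10.ATA_EXAMPLE_DISK_1", "datastore1", "rdm-disk1"))
```

The resulting `.vmdk` pointer file is what gets attached to the TrueNAS VM as an existing hard disk, one per physical drive.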

iSCSI SAN for Scalability #

One of the determining factors for me to choose TrueNAS was its support for the iSCSI protocol. iSCSI is a storage protocol that provides block-level access over a TCP/IP network. In plain language, I can use iSCSI to mount a ZFS volume on another ESXi host and make it appear like a local hard disk. This allowed me to create a vSAN-equivalent storage abstraction layer that makes storage an independent part of the homelab. Should I ever want to add more lab servers, I can simply buy a machine with a beefy CPU and an abundance of RAM, and attach an iSCSI target as its data storage. As long as I don’t have a heavy I/O workload (unlikely), the homelab should be able to scale horizontally.

Remote Access #

I’m using Tailscale VPN for remote access. In particular, I’ve created a Linux jump host and installed a Tailscale agent on it. The agent is configured to forward traffic to a few designated subnet prefixes. I then configured a few firewall rules to further restrict which parts of the network this jump box can access.
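The two layers of control (what the jump host routes, and what the firewall lets it touch) can be sketched as a simple prefix check. Everything below is hypothetical: the prefixes are illustrative, not my actual configuration, and the real enforcement happens in Tailscale's subnet routing and the UDM firewall rather than in Python.

```python
import ipaddress

# Hypothetical prefixes the jump host forwards traffic to (on a real node,
# these would be the subnet routes it advertises to the tailnet).
ADVERTISED_ROUTES = [
    ipaddress.ip_network("192.168.20.0/24"),
    ipaddress.ip_network("192.168.30.0/24"),
]

# Hypothetical firewall allow-list that narrows what the jump host may reach.
JUMP_HOST_ALLOWED = [
    ipaddress.ip_network("192.168.30.0/24"),
]


def reachable_via_jump_host(dest: str) -> bool:
    """A destination is reachable only if it is both routed and allowed."""
    addr = ipaddress.ip_address(dest)
    routed = any(addr in net for net in ADVERTISED_ROUTES)
    allowed = any(addr in net for net in JUMP_HOST_ALLOWED)
    return routed and allowed


for dest in ("192.168.30.15", "192.168.20.15", "192.168.1.1"):
    print(dest, reachable_via_jump_host(dest))
```

Keeping the firewall list narrower than the routed prefixes means a compromised jump host cannot wander into every subnet it technically knows a route for.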

The Tailscale team has really done a fantastic job. I found it particularly suitable for the homelab use case because

- Tailscale is based on WireGuard, and WireGuard is fast, secure, and stable even when the link state changes (e.g. from 4G to WiFi).

- Native WireGuard doesn’t work well with NATs (and who doesn’t have NAT these days?). Tailscale solves the NAT traversal pain point by using a dozen NAT traversal techniques. If none of them works, Tailscale falls back to a global relay server to establish a temporary connection first and uses that to bootstrap a WireGuard tunnel. Once the tunnel is established, Tailscale silently hands the traffic back over to WireGuard.

- Because WireGuard is point-to-point, there’s no traffic/QoS limit. This especially helps to reduce latency and improve throughput when I’m travelling within the city. Whether I’m in the office, a coffee house, or on a train, my SSH/RDP/internal web service/SMB sessions are as butter-smooth as if I were at home.

Projects in Homelab #

Many have asked me the same question: “Your homelab looks cool. But what for?” VMs come and go, but if I have to summarise, below are some of the things that are running or used to run:

- Malware lab behind pfSense

- Standard Kubernetes cluster with three nodes

- ELK lab to test detection ideas

- VMware lab to play around with ESXi/vCenter

- VMs for remote development

- VMs of different OS versions for exploit development

- Disposable VMs for running privacy-intrusive software

- Kali from-any-where

- Mobile pentest VM to run Linux specific tools (use VMRC to attach the phone)

- Private cloud storage, e.g. SMB sharing, time machine backup

- Personal services, e.g. AdGuard DNS, torrenting, Nextcloud, Plex, Grafana, etc.

Surely some of them can be run on a laptop/PC. But having a homelab and being able to access it at any time gives me a lot more flexibility. I get instant access to different environments that I can quickly experiment with, set up complicated architectures without worrying about laptop resources, and quickly validate/pentest my ideas wherever I go, as long as there’s Internet.

Epilogue #

Well, that pretty much concludes a busy homelab upgrade season. I have to say I’m quite satisfied with the current setup, so it should last for a while. The size of the cluster is at a sweet spot now: it still lets me have fun without spending too much time on maintenance. What’s the biggest lesson I learnt? A homelab is a place to embrace quick-and-dirty work. Keeping projects moving is much more rewarding than having an impeccable Ansible script or a flawless production-grade setup.